Toulo-useR!

Eran Raviv

July 11, 2019

Three parts to this talk

- On the ForecastComb package

- Other things combinations

- How ForecastComb came to be

Part 1

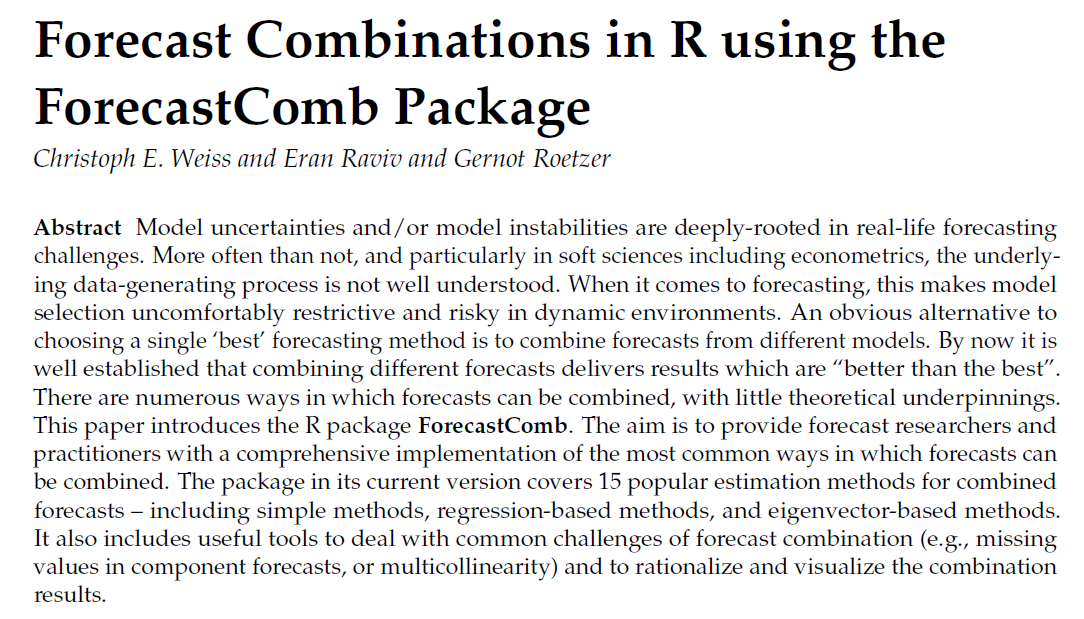

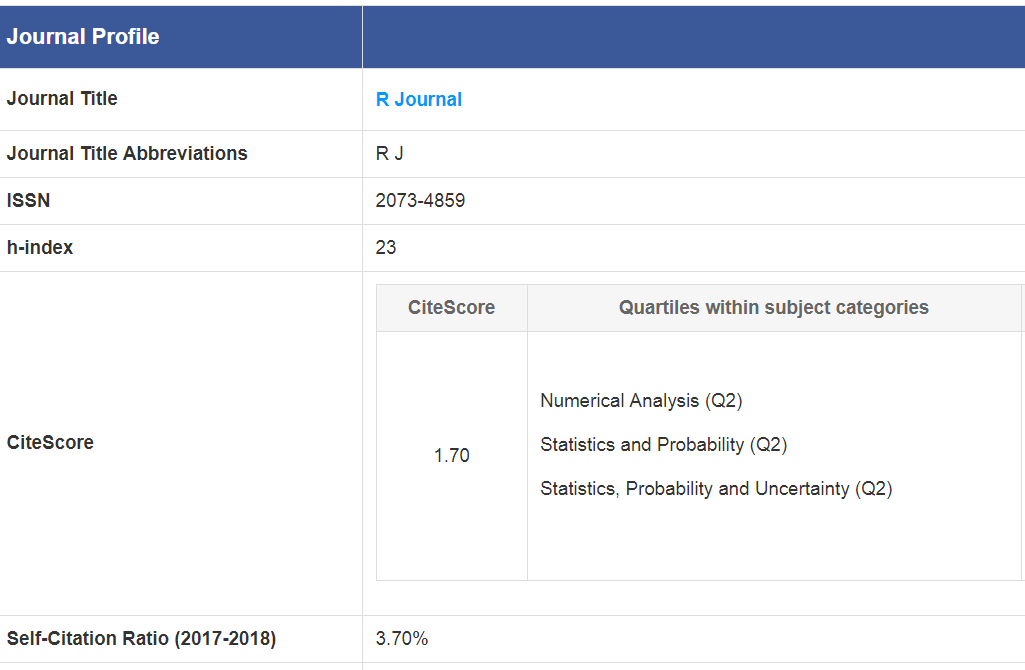

The ForecastComb package

Forecast Combinations in our Context

- What is it: \(f^{combined} = \frac{\sum_{i = 1}^P f_i }{P}\)

- Why is it: Because it works

- And why is that: More research is needed

- Intuition: Biases and Model risk
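As a minimal sketch of the formula above, the equal-weight combination is a row-wise mean over the individual forecasts. The matrix `f` and its values are made up for illustration and are not the data used in this talk.

```r
# Hypothetical forecasts: rows are time periods, columns are P = 3 models.
f <- cbind(
  model_a = c(10.0, 11.0, 12.0),
  model_b = c(12.0, 10.0, 13.0),
  model_c = c(11.0, 12.0, 11.0)
)

# Equal-weight combination: sum over models divided by P.
P          <- ncol(f)
f_combined <- rowSums(f) / P   # same result as rowMeans(f)
print(f_combined)
```

No weights are estimated here, which is part of why this scheme is hard to beat: there is no estimation error in the weights.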

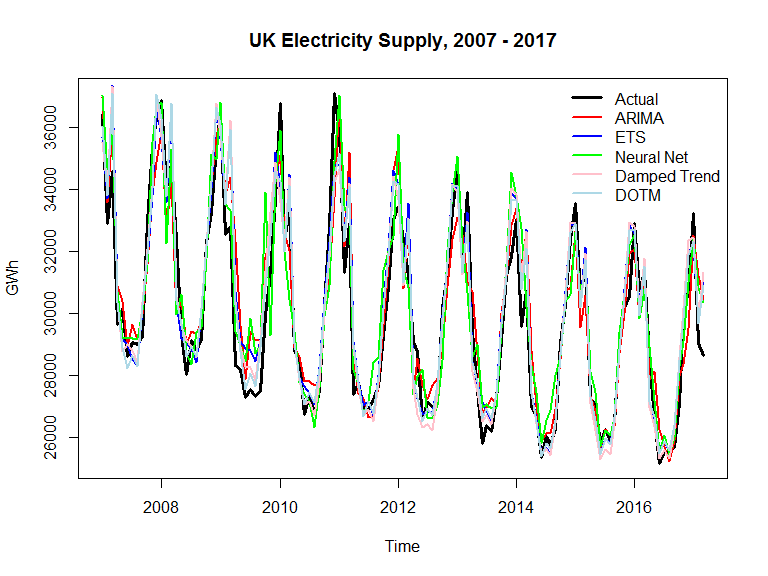

An example

Data preparation

Individual performance

| Model | Train | Test |
|---|---|---|
| arima | 1.24 | 0.99 |
| ets | 1.17 | 0.87 |
| nnet | 1.27 | 0.98 |
| dampedt | 1.18 | 0.92 |
| dotm | 1.04 | 0.77 |

“…combining multiple forecasts leads to increased forecast accuracy. In many cases one can make dramatic performance improvements by simply averaging the forecasts.” (source: Forecasting: Principles and Practice. Rob J Hyndman and George Athanasopoulos)

OLS combination

\[ y_t = {\alpha} + \sum_{i = 1}^P {\beta_i} f_{i,t} +\varepsilon_t.\]

The combined forecast is then given by:

\[f^{comb} = \widehat{\alpha} + \sum_{i = 1}^P \widehat{\beta}_i f_i.\]
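The two equations above can be sketched in base R with `lm()`: regress the observed values on the individual forecasts over a training period, then apply the estimated intercept and slopes to new forecasts. The data below are simulated, and the names `f1`, `f2`, `train` and `test` are assumptions for illustration only. This is not necessarily how the ForecastComb package implements the method internally.

```r
set.seed(1)

# Simulated data (illustrative only): a target series and P = 2 noisy forecasts of it.
n  <- 100
y  <- cumsum(rnorm(n))
f1 <- y + rnorm(n, sd = 0.5)          # hypothetical forecast 1
f2 <- 0.5 + y + rnorm(n, sd = 1.0)    # hypothetical forecast 2, biased upward

train <- 1:80
test  <- 81:100
dat   <- data.frame(y = y, f1 = f1, f2 = f2)

# Estimate alpha and the beta_i by regressing observed values on the forecasts.
fit <- lm(y ~ f1 + f2, data = dat[train, ])
print(coef(fit))                      # alpha-hat, beta-hat_1, beta-hat_2

# Combined forecast on the held-out period.
f_comb <- predict(fit, newdata = dat[test, ])

# Compare out-of-sample accuracy of the inputs and the combination.
rmse <- function(actual, pred) sqrt(mean((actual - pred)^2))
print(c(f1   = rmse(y[test], f1[test]),
        f2   = rmse(y[test], f2[test]),
        comb = rmse(y[test], f_comb)))
```

Because the intercept and slopes are unrestricted, the regression can correct a biased forecast, but the estimated weights carry sampling error of their own.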

Simple combination

Does not outperform the best model:

| Model | Train | Test |
|---|---|---|
| arima | 1.24 | 0.99 |
| ets | 1.17 | 0.87 |
| nnet | 1.27 | 0.98 |
| dampedt | 1.18 | 0.92 |
| dotm | 1.04 | 0.77 |

> names(SA)

> 1 Method

> 2 Models

> 3 Fitted

> 4 Accuracy_Train

> 5 Input_Data

> 6 Weights

> 7 Forecasts_Test

> 8 Accuracy_Test

> [1] "0.7823"

OLS combination

Indeed outperforms the best model:

| Model | Train | Test |
|---|---|---|
| arima | 1.24 | 0.99 |
| ets | 1.17 | 0.87 |
| nnet | 1.27 | 0.98 |
| dampedt | 1.18 | 0.92 |
| dotm | 1.04 | 0.77 |

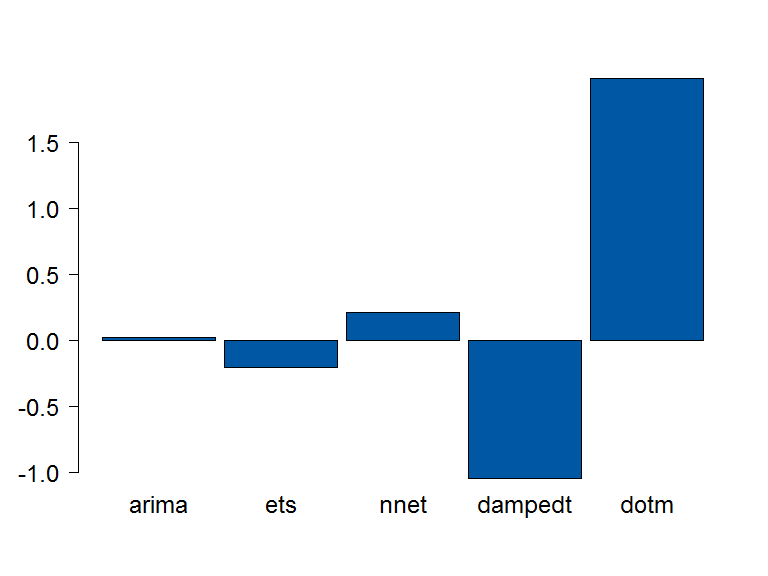

OLS Weights

A linear combination of a couple of models

How come it works?

What can the ForecastComb package do for you?

- Trivial

  - Simple. Median. Trimming.

- Accuracy-based

  - (Inverse) Rank. (Inverse) RMSE. Eigenvector approach.

(Cheng Hsiao and Shui Ki Wan. Is there an optimal forecast combination? JOE, 178:294-309, 2014)
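To make the first two families concrete, here is a minimal base R sketch of the median, a trimmed mean, and inverse-RMSE weights. The forecasts `f` and realised values `y` are invented, and the weighting shown is one common reading of "inverse RMSE", not a reproduction of the package's code.

```r
# Hypothetical forecasts (rows: periods, columns: models) and realised values.
f <- cbind(
  m1 = c(1.0, 2.0, 3.0),
  m2 = c(1.4, 2.4, 2.6),
  m3 = c(0.0, 3.5, 5.0),   # a deliberately poor model
  m4 = c(1.2, 1.8, 3.2),
  m5 = c(0.9, 2.2, 2.9)
)
y <- c(1.1, 2.1, 3.0)

# Trivial schemes: nothing is estimated.
f_median  <- apply(f, 1, median)
f_trimmed <- apply(f, 1, mean, trim = 0.2)  # drops the lowest and highest of the 5 forecasts

# Accuracy-based scheme: weights proportional to 1 / RMSE of each model.
rmse   <- sqrt(colMeans((f - y)^2))         # y is recycled down each column
w      <- (1 / rmse) / sum(1 / rmse)
f_invw <- as.vector(f %*% w)

print(round(w, 3))
print(cbind(median = f_median, trimmed = f_trimmed, inv_rmse = f_invw))
```

The median and trimmed mean guard against an outlying model without estimating anything, while the inverse-RMSE weights down-weight it in proportion to its past errors.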

What can the ForecastComb package do for you? (cont’d)

- Regression based methods

  - OLS, LAD, CLS

  - Complete subset regressions

- Structure

  - Plotting, summary and predict methods

Part 2

Other things combination

Many widely used statistical techniques implicitly use forecast combination

- Bagging

- Random Forest

- Moving average

- Shrinkage

- Inception blocks in convolutional neural networks

$$ D_t = (1-\lambda) \sum_{j=1}^{\infty} \lambda^{j-1} \varepsilon_{t-j}\varepsilon^{\prime}_{t-j} = (1-\lambda)\,\varepsilon_{t-1}\varepsilon^{\prime}_{t-1}+\lambda D_{t-1} $$

$$ \Sigma_{Combined}= \alpha \Sigma_{1} + (1-\alpha) \Sigma_{2} $$
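Both expressions above are weighted averages, which is the sense in which they are combinations. A minimal sketch in base R follows. The residuals are simulated, the values of `lambda` and `alpha`, the starting value for `D`, and the diagonal target `Sigma_2` are all assumptions chosen for illustration. The exact timing convention for which residual enters each update is glossed over.

```r
set.seed(42)

# Simulated residuals (illustrative only): 250 periods, 2 series.
eps    <- matrix(rnorm(500), ncol = 2)
lambda <- 0.94            # assumed decay parameter

# Exponentially weighted covariance, recursive form: each update is a
# weighted average of the latest outer product and the previous estimate.
D <- cov(eps)             # assumed starting value
for (t in seq_len(nrow(eps))) {
  D <- (1 - lambda) * tcrossprod(eps[t, ]) + lambda * D
}

# Combining two covariance estimates with weight alpha.
alpha          <- 0.5                     # hypothetical weight
Sigma_1        <- cov(eps)                # sample covariance
Sigma_2        <- diag(diag(Sigma_1))     # simple diagonal target
Sigma_combined <- alpha * Sigma_1 + (1 - alpha) * Sigma_2

print(D)
print(Sigma_combined)
```

With a diagonal target, the combination leaves the variances unchanged and scales the covariances by `alpha`.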

For time series

- Across different windows

- Across forecasting horizon

- Rectify

Recursive and direct multi-step forecasting: the best of both worlds

- For hierarchical time series

- Across information criteria

The weighted average information criterion for multivariate regression model selection

For densities/probability

- For densities from VAR

- More generally

- For probability forecasts

- For VaR

Even more

- In factor models

- Considering outliers

- Across quantiles

- Now on my to-read list:

Simplicity makes me happy (Alicia Keys)

- "empirical studies have shown that such a simple equally weighted pooling of forecasts performs quite well in practice, relative to other approaches that rely on estimated combination weights, a phenomenon dubbed the forecast combination puzzle."

- "One of the puzzles for forecast combination is the documentation of simple average (or equally weighted combination) dominating more sophisticated forecast combinations (e.g. Huang and Lee (2010), Palm and Zellner (1992) and Stock and Watson (2004))."

(Cheng Hsiao and Shui Ki Wan. Is there an optimal forecast combination? JOE, 178:294-309, 2014)

- Intuitive explanation here:

Still more coming..

Not sure how much more mileage we can get out of this

Part 3

A package is born

PPT at work

Code and manual

Then an email

…

Partners in crime

Chris E Weiss

Gernot Roetzer

Yours truly

Yet we have yet to meet

Collaboration and hard work

Finally..