Bootstrapping in its general form (“ordinary” bootstrap) relies on IID observations which staples the theory backing it. However, time series are a different animal and bootstrapping time series requires somewhat different procedure to preserve dependency structure.

In what follows I use the Misery index as the time series for demonstration. Inflation and unemployment numbers concerning the Eurozone (17 countries) can be found here. In order to bootstrap time series, here are two things you can do:

1. Bootstrap based on IID innovations

The idea is to estimate the model, and then use the residuals that are, by construction, close to being independent. Bootstrap these residuals and “back out” the observations using your estimated parameters. Like so:

options(digits = 4) ; library(tseries)

y = ts(read.table("/mindex1.txt",sep = "\t",header = F)[,2] ,frequency = 12, start = c(1995,6) )

par(mfrow=c(1,1))

plot(y, main = "Misery Index over Time", ylab = "Index", lwd = 2,ty = "b",cex.main = 2)

# Stationary?

adf.test(y)

kpss.test(y)

#Looks like it.

######## Bootstrap based on IID innovations ##########

numlags = 12 # choose the model..

n = length(y)

ar1 = ar.ols(y, demean = F,intercept=T, order.max = numlags,aic = F) # standard OLS

plot(ar1$res)

scaled.res = scale(ar1$res) # that is important for the theory to hold.

summ(scaled.res) # see Code category to get this "summ" function

b1 = y[1:numlags] # Naturally the first few residual does not exist so we replace them with 0. So the bootstrap vale is the same as the original

R = 100 # how many bootstrap samples do you want?

res.star = matrix(nrow = (n-numlags),ncol = R)

obs.star = matrix(nrow = n,ncol = R) # will hold the bootstrapped series

for (i in 1:R){

res.star[,i] = sample(na.omit(scaled.res),(n-numlags), replace = T)

obs.star[1:numlags,i] = b1 # for the first obs we plug the original data

for (j in (numlags+1):n){

obs.star[j,i] = ar1$x.intercept + ar1$ar%*%obs.star[(j-1):(j-numlags),i] + res.star[(j-numlags),i]

}}

# Sanity check:

plot(obs.star[,"Pick random realization"], ty = "l")

plot(y, ty = "l", main = "Original")

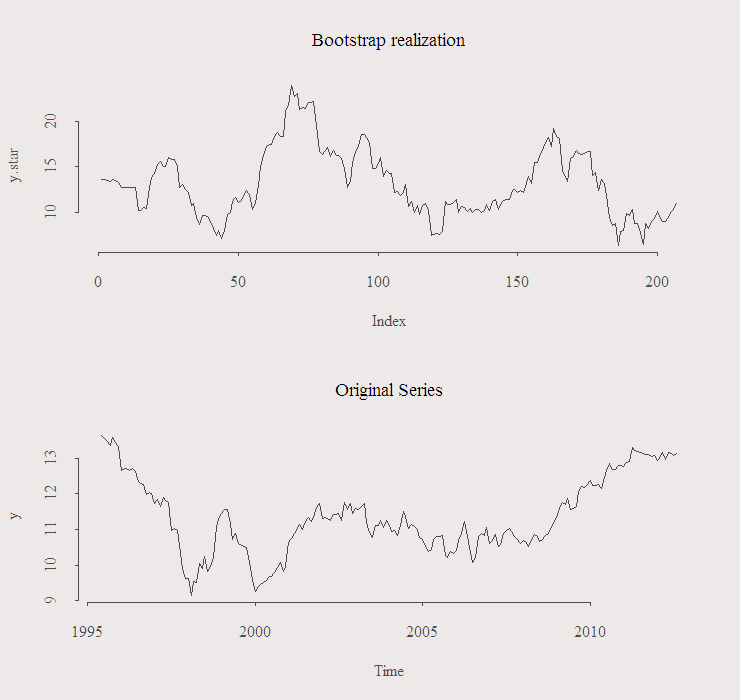

This is an example of one realization:

As you can see the variance of the realization seems a bit high compared with the original. For a real application I would try to get something closer to real series, this can be achieved via:

## We use a built in function to simulate from our model.

## Note the reduced variance I request: sd = sd(y)/2.

#the value 2 is arbitrary, we just want lower variance

arima.sim(list(order = c(numlags,0,0),

ar =ar.ols(y, demean = T,intercept=F, order.max = numlags,aic = F)$ar ),

n = n, sd = sd(y)/2 )

That’s that for the first option, quite simple but..

– you need to specify a model so that you can obtain the residuals.

– might be that even after “scaled.res = scale(ar1$res)” IID assumption is still false, which brings us to the second option:

2. Block Bootstrap (or MBB for moving block bootstrapping)

Essentially, we cannot sample the data directly because we lose the dependency structure. Solution is to sample whole blocks and concatenate them, in contrast to a single observation at a time.

Good idea yet with its own issues. For example, what’s best block size? too small or too large block size will fail to properly replicate the behavior of the original series and will mess up inference. Are blocks allowed to overlap? How boundaries should be treated, where there are no observation left to “complete” the block. For more details:

Resampling Methods for Dependent Data

Here I show the Politis and Romano Idea (1994) AKA stationary bootstrap, applicable to stationary and weakly dependent data. Blocks are of a random size. Block size is a random variable in itself coming from a geometric distribution with some expected block size which should be determined by you.

There is more than one way to code this, I think the following is the easiest:

acf(as.numeric(y)) # have a look to determine appropriate block size

gprob = 1/20 # average Block size 14 months

R = 100

ystar=matrix(nrow = n,ncol = R)

for (r in 1:R){

loc = round(runif(1,1,n)) # loc for location

for (i in 1:n){

g1 = runif(1,0,1)

# In probability gprob, we take next observation, otherwise we start a new block

if (g1>gprob) {loc = loc+1} else {loc = round(runif(1,1,n))}

if (loc>n) loc = loc-n # wrap the serie as a circule

ystar[i,r] = y[loc]

}}

plot(ystar[,"Pick random realization"], ty = "l")

plot(y,ty = "l")

Notes:

1. As usual, there are packages out there and while I did dive in the code of function “tsboot”, it’s hard to figure out the subject without coding it, and even harder (impossible) to customize it to do what you need (also as usual). You don’t need to trust me on this one, type “page(tsboot)“ and have a look. “boot” is an excellent package, but as such, very general.

2. Here I demonstrated the methods using AR(p) models, but it is of course applicable to whatever model you can generate residuals from.

Resources:

[asa mytpl]0387988548[/asa]

[asa mytpl]3540262393[/asa]

Hello,

Your article is interesting, but i have one question especially for the stationnary bootstrap, the choose of block length is crucial how do you determine it just by using acf?

Regards

For the stationary bootstrap we need only the AVERAGE block size, so it is (somewhat) less crucial. Similar issue can be found when determining the window size in smoothing procedures. Possible solution is to tailor the choice to what you need using data-driven methods, e.g., if you deal with forecasting, use a validation set and see which Block Length has best out-of-sample performance and use that for the test set.

Thank you very much for sharing this. I would like to point out that for the sieve bootstrap, the column-wise loop can be vectorized, resulting in much faster code:

res.star[,] <- sample(na.omit(scaled.res),(n-numlags)*R, replace = T)

obs.star[1:numlags,] <- b1 # for the first obs we plug the original data

for (j in (numlags+1):n)

obs.star[j,] <- ar1$x.intercept + ar1$ar%*%obs.star[(j-1):(j-numlags),,drop=FALSE] + res.star[(j-numlags),]

In your Sieve bootstrap you are determining the new AR(p) based on the first observations of the data. For that reason, wouldn’t it be wiser to simulate an AR(p) process that is longer, say length n + m instead of n, and then discard the first m observations so that the effect of the starting values disappears?

I write: “# Naturally the first few residual does not exist so we replace them with 0.” I think when working with financial time series, which are usually long enough, the impact of this decision is minimal. Though I admit that theoretically your suggestion seems better.

I would like to point out that there’s a problem with how you’re standardizing your residuals. scale(ar1$res) will generate a statistic which is not related to the magnitude of your data points. Ie. if you have large data points, your method will shrink your residuals enormously.

What you should be doing is simply centering the residuals around zero without standardizing them. This is simple to do, just write scale(ar1$res,scale=F).

You are right, thank you.