In the classical regime, when we have plenty of observations relative to what we need to estimate, we can rely on the sample covariance matrix as a faithful representation of the underlying covariance structure. However, in the high-dimensional settings common to modern data science, where the number of attributes/features $p$ is comparable to the number of observations $n$, the sample covariance matrix is a bad estimator. It is not merely noisy; it is misleading. The eigenvalues of such matrices undergo a predictable yet deceptive dispersion, inflating into “signals” that have no basis in reality. Fortunately, some machinery from Random Matrix Theory offers one way to correct for the biases that high-dimensional data creates.
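To see the dispersion in action, here is a minimal sketch in Python (the language and the simulated sizes `n` and `p` are my assumptions for illustration, not taken from the post). The true covariance is the identity, so every true eigenvalue equals 1, yet the sample eigenvalues spread out roughly over the Marchenko-Pastur range $[(1-\sqrt{p/n})^2, (1+\sqrt{p/n})^2]$.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p = 500, 250                      # observations and features (illustrative sizes)

# Simulated data whose TRUE covariance is the identity: all true eigenvalues are 1.
X = rng.standard_normal((n, p))
S = np.cov(X, rowvar=False)          # p x p sample covariance matrix
eigvals = np.linalg.eigvalsh(S)      # eigenvalues of a symmetric matrix, ascending

# Marchenko-Pastur support for unit variance and aspect ratio q = p / n.
q = p / n
mp_lower = (1 - np.sqrt(q)) ** 2
mp_upper = (1 + np.sqrt(q)) ** 2

print(f"smallest / largest sample eigenvalue: {eigvals.min():.3f} / {eigvals.max():.3f}")
print(f"Marchenko-Pastur bounds:              {mp_lower:.3f} / {mp_upper:.3f}")
```

The largest sample eigenvalue sits far above 1 even though there is no signal at all in the data, which is exactly the kind of spurious “signal” described above.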

Tag: volatility

Correlation and correlation structure (10) – Inverse Covariance

The covariance matrix is central to many statistical methods. It tells us how variables move together, and its diagonal entries, the variances, are very much our go-to measure of uncertainty. But the real action lives in its inverse. We call the inverse covariance matrix either the precision matrix or the concentration matrix. Where did these terms come from? In this post I explain their origin and why the inverse of the covariance is named that way. I doubt this has kept you up at night, but I still think you’ll find it interesting.

Correlation and correlation structure (8) – the precision matrix

If you are reading this, you already know that the covariance matrix represents unconditional linear dependency between the variables. Far less mentioned is the bewitching fact that the elements of the inverse of the covariance matrix (i.e. the precision matrix) encode the conditional linear dependence between the variables. This post shows why that is the case. I start with the motivation to even discuss this, then the math, then some code.
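As a quick illustration of that claim, here is a minimal Python sketch on simulated data (the chain x → y → z and all variable names are my assumptions, chosen for illustration). Scaling the off-diagonal elements of the precision matrix, $-P_{ij}/\sqrt{P_{ii}P_{jj}}$, gives the partial correlations, i.e. the linear dependence between two variables conditional on the rest.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 100_000

# A simple chain: x drives y, and y drives z. Given y, x carries no extra information on z.
x = rng.standard_normal(n)
y = x + rng.standard_normal(n)
z = y + rng.standard_normal(n)
data = np.column_stack([x, y, z])

corr = np.corrcoef(data, rowvar=False)        # unconditional (marginal) correlations
precision = np.linalg.inv(np.cov(data, rowvar=False))

# Partial correlations: minus the scaled off-diagonal elements of the precision matrix.
d = np.sqrt(np.diag(precision))
partial_corr = -precision / np.outer(d, d)
np.fill_diagonal(partial_corr, 1.0)

print(f"corr(x, z)         = {corr[0, 2]:.3f}")          # clearly non-zero
print(f"partial corr(x, z) = {partial_corr[0, 2]:.3f}")  # close to zero
```

The marginal correlation between x and z is sizable, while the corresponding precision-matrix entry is essentially zero: conditional on y, the two are linearly unrelated.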

Correlation and Correlation Structure (6) – Distance Correlation

While linear correlation (aka Pearson correlation) is by far the most common dependence measure, there are a few arguably better ways to characterize/estimate the degree of dependence between variables. This is a fascinating topic I keep coming back to. There is so much for a typical geek to appreciate: non-linear dependencies, whether we should consider the noise in the data or rather just focus on the underlying process, and whether we should consider the whole distribution or just a few moments.

In this post number 6 on correlation and correlation structure I share another dependency measure called “distance correlation”. It has been around for a while now (2009, see references). I provide just the intuition, since the math has little to do with the way distance correlation is computed, but rather with the theoretical justification for its practical legitimacy.
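For readers who want to see the computation itself, below is a minimal sketch of the sample distance correlation for two univariate series, written in Python with NumPy (the function name `distance_correlation` and the toy data are my own, for illustration only). It double-centres the pairwise distance matrices and then proceeds much like an ordinary correlation.

```python
import numpy as np

def distance_correlation(x, y):
    """Sample distance correlation between two 1-D arrays of equal length."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    # Pairwise absolute distances within each series.
    a = np.abs(x[:, None] - x[None, :])
    b = np.abs(y[:, None] - y[None, :])
    # Double centring: subtract row and column means, add back the grand mean.
    A = a - a.mean(axis=0, keepdims=True) - a.mean(axis=1, keepdims=True) + a.mean()
    B = b - b.mean(axis=0, keepdims=True) - b.mean(axis=1, keepdims=True) + b.mean()
    dcov2 = max((A * B).mean(), 0.0)               # squared distance covariance
    denom = np.sqrt((A * A).mean() * (B * B).mean())
    return float(np.sqrt(dcov2 / denom)) if denom > 0 else 0.0

# Toy example: a purely non-linear (quadratic) relationship.
x = np.linspace(-1.0, 1.0, 201)
y_quadratic = x ** 2
pearson = np.corrcoef(x, y_quadratic)[0, 1]
dcor = distance_correlation(x, y_quadratic)
print(f"Pearson correlation:  {pearson:.3f}")
print(f"Distance correlation: {dcor:.3f}")
```

In this toy example Pearson correlation is essentially zero although y is a deterministic function of x, whereas the distance correlation is clearly positive.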

A New Parameterization of Correlation Matrices

In volatility modelling, a typical challenge is to keep the covariance matrix estimate valid, meaning (1) symmetric and (2) positive semi-definite. A new paper published in Econometrica (citing from the paper) “introduces a novel parametrization of the correlation matrix. The reparametrization facilitates modeling of correlation and covariance matrices by an unrestricted vector, where positive definiteness is an innate property” (emphasis mine). Econometrica is known to publish ground-breaking research, and you may wonder: what is the big deal in being able to reparametrise the correlation matrix?

Portfolio Construction Tilting towards Higher Moments

When you build your portfolio you must decide what your risk profile is. A pension fund’s risk profile is different from that of a hedge fund, which is different from that of a family office. Everyone’s goal is to maximize returns given the risk. Sinfully but commonly, risk is defined as the variability of the portfolio, and so we feed our expected returns and expected risk to some optimization procedure in order to find the optimal portfolio weights. Risk serves as a decision variable. You choose the risk, and (hope to) get the returns.

A new paper from Kris Boudt, Dries Cornilly, Frederiek Van Holle and Joeri Willems titled Algorithmic Portfolio Tilting to Harvest Higher Moment Gains makes good progress in terms of our definition of risk and of the risk-return trade-off. They propose a quantified way in which you can adjust your portfolio to account not only for the variance, but also for higher moments, namely skewness and kurtosis. They do that in two steps. The first is to simply set your portfolio based on whichever approach you follow (e.g. minvol, equal risk contribution or other). In the second step you tilt the portfolio such that the higher moments are brought into focus and get the attention they deserve. This is done by deviating from the original optimization target so that higher moments are utility-improved: less variance, better skew and lower kurtosis.

Create own Recession Indicator using Mixture Models

Context

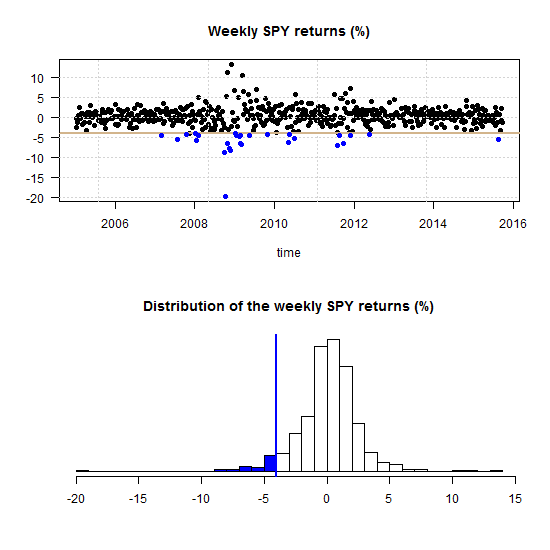

Broadly speaking, we can classify financial market conditions into two categories: Bull and Bear. The first is a “todo bien” market, tranquil and generally upward sloping. The second describes a market with a downward trend, usually more volatile. It is thought that the bull/bear terms originate from the way those animals supposedly attack: a bull thrusts its horns up while a bear swipes its paws down. At any given moment we can only guess which state we are in; there is no way of really telling, simply because the two states don’t have a uniform, exact definition. So basically we never actually observe the state membership of an observation. In this post we use (finite) mixture models to try and assign daily equity returns to their bull/bear subgroups. It is essentially an unsupervised clustering exercise. We will create our own recession indicator to help us quantify whether the equity market is contracting or not. We use minimal inputs, nothing but equity return data. We start with a short description of Finite Mixture Models and then move on to a hands-on practical example.
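To give a flavour of the idea, here is a minimal sketch of a two-component Gaussian mixture fitted by the EM algorithm, in Python with NumPy. Everything here is an assumption made for illustration: the returns are simulated rather than real equity data, the function name `fit_two_state_mixture` is hypothetical, and the post itself may well use a different implementation. The posterior probability of the high-volatility component plays the role of a bear-market indicator.

```python
import numpy as np

def fit_two_state_mixture(returns, n_iter=200):
    """EM for a two-component univariate Gaussian mixture (illustrative sketch)."""
    r = np.asarray(returns, dtype=float)
    w = np.array([0.5, 0.5])                        # mixing weights
    mu = np.array([r.mean(), r.mean()])             # component means
    sd = np.array([0.5 * r.std(), 2.0 * r.std()])   # start with one calm, one volatile state
    for _ in range(n_iter):
        # E-step: posterior probability of each state for every observation.
        dens = np.exp(-0.5 * ((r[:, None] - mu) / sd) ** 2) / (sd * np.sqrt(2 * np.pi))
        resp = w * dens
        resp /= resp.sum(axis=1, keepdims=True)
        # M-step: update weights, means and standard deviations.
        nk = resp.sum(axis=0)
        w = nk / len(r)
        mu = (resp * r[:, None]).sum(axis=0) / nk
        sd = np.sqrt((resp * (r[:, None] - mu) ** 2).sum(axis=0) / nk)
    return w, mu, sd, resp

# Simulated daily returns (assumed numbers): 1500 calm "bull" days, 500 volatile "bear" days.
rng = np.random.default_rng(2)
simulated = np.concatenate([
    rng.normal(0.0008, 0.007, 1500),
    rng.normal(-0.0020, 0.025, 500),
])

w, mu, sd, resp = fit_two_state_mixture(simulated)
bear = int(np.argmax(sd))             # label the more volatile component as "bear"
bear_prob = resp[:, bear]             # the indicator: probability of being in the bear state
print("weights:", w.round(3), "means:", mu.round(4), "sds:", sd.round(4))
```

Plotting `bear_prob` over time gives the indicator; a threshold such as 0.5 turns it into a hard bull/bear classification, though that cut-off is a judgment call.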

Curse of dimensionality part 3: Higher-Order Comoments

Higher moments such as Skewness and Kurtosis are not as explored as they should be.

These moments are crucial for managing portfolio risk, at least as important as volatility, if not more so. Skewness relates to asymmetry risk and Kurtosis relates to tail risk.

Despite their great importance, those higher moments enjoy only a small portion of the attention compared with their lower, friendlier moments: the mean and the variance. In my opinion, one reason for this may be the impossibility of estimating those moments; estimating them accurately, that is.

It is yet another situation where the Curse of Dimensionality rears its enchanting head (and an idea for a post is born...).

Multivariate Volatility Forecast Evaluation

The evaluation of volatility models is gracefully complicated by the fact that, unlike other time series, even the realization is not observable. Two researchers would never disagree about what yesterday’s stock price was, but they can easily disagree about what yesterday’s stock volatility was. Because we don’t observe volatility directly, each of us uses their own proxy of choice. There are many ways to skin this cat (more on volatility proxy here).

In a previous post, Univariate volatility forecast evaluation, we considered common ways in which we can evaluate how good our volatility model is, dealing with one time series at a time. But how do we evaluate, or compare, two models in a multivariate setting, with two covariance matrices?

The case for Regime-Switching GARCH

GARCH models are very responsive in the sense that they allow the fit of the model to adjust rather quickly to incoming observations. However, this adjustment depends on the parameters of the model, and those may not be constant. Parameter estimation for a GARCH process is not as quick as for, say, a simple regression, especially in the multivariate case. Because of that, I think, the literature on time-varying GARCH is not yet at full speed. This post makes the point that there is a need for such a class of models. I demonstrate this by looking at the parameters of a Threshold-GARCH model (aka GJR GARCH), before and after the 2008 crisis. In addition, you can learn how to make inference on GARCH parameters without relying on asymptotic normality, i.e. using the bootstrap.

Multivariate volatility forecasting, part 6 – sparse estimation

First things first.

What do we mean by sparse estimation?

Sparse – thinly scattered or distributed; not thick or dense.

Multivariate volatility forecasting (5), Orthogonal GARCH

In multivariate volatility forecasting (4), we saw how to create a covariance matrix which is driven by a few principal components, rather than a complete set of tickers. The advantages of using such factor volatility models are plentiful.

Correlation and correlation structure (3), estimate tail dependence using regression

Multivariate volatility forecasting (4), factor models

To be instructive, I always use very few tickers to describe how a method works (and this tutorial is no different). Most of the time is spent on methods that we can easily scale up. Even if exemplified using only, say, 3 tickers, a more realistic 100 or 500 is not an obstacle. But is it really necessary to model the volatility of each ticker individually? No.

If we want to forecast the covariance matrix of all components in the Russell 2000 index, we don’t leave much on the table if we model only a few underlying factors, far fewer than 2000.

Volatility factor models are one of those rare cases where the appeal is both theoretical and empirical. The idea is to create a few principal components and, under the reasonable assumption that they drive the bulk of comovement in the data, model those few components only.
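The idea can be sketched in a few lines of Python. This is a static illustration on simulated returns, with all sizes and names assumed by me; in a volatility-forecasting setting the few components would each get their own time-varying volatility model, which is not shown here. We keep the top principal components of the sample covariance and add back asset-specific variances on the diagonal.

```python
import numpy as np

rng = np.random.default_rng(3)
n_obs, n_assets, n_factors = 1000, 50, 3      # illustrative sizes

# Simulated returns with a genuine factor structure plus idiosyncratic noise.
loadings = rng.standard_normal((n_assets, n_factors))
factors = rng.standard_normal((n_obs, n_factors))
returns = factors @ loadings.T + 0.5 * rng.standard_normal((n_obs, n_assets))

S = np.cov(returns, rowvar=False)             # full sample covariance matrix
eigvals, eigvecs = np.linalg.eigh(S)          # eigenvalues come back in ascending order
top = np.argsort(eigvals)[::-1][:n_factors]   # indices of the largest eigenvalues
V, lam = eigvecs[:, top], eigvals[top]

# Covariance implied by the few components, plus asset-specific variances on the diagonal.
common = (V * lam) @ V.T
specific_var = np.diag(S) - np.diag(common)
factor_cov = common + np.diag(specific_var)

explained = lam.sum() / eigvals.sum()
print(f"share of total variance captured by {n_factors} components: {explained:.1%}")
```

With only three components the bulk of the comovement is captured, and the resulting matrix keeps the original variances on its diagonal while remaining positive definite.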

Multivariate volatility forecasting (3), Exponentially weighted model

Broadly speaking, complex models can achieve great predictive accuracy. Nonetheless, a winner in a Kaggle competition is required only to attach code for the replication of the winning result. She is not required to teach anyone the built-in elements of her model which give it the specific edge over other competitors. In a corporate setting your manager and his manager and so forth MUST feel comfortable with the underlying model. Mumbling something like “This artificial-neural-network is obtained by using a grid search over a range of parameters and connection weights where the architecture itself is fixed beforehand…”, forget it!